Research

My research sits at the intersection of Human-Computer Interaction (HCI) and Applied Machine Learning. It is guided by two core questions: How can user interfaces be augmented with intelligence to support interaction? And what insights about human behavior can be derived from interaction and sensor data? To address these questions, my work spans multimodal and implicit interaction, intelligent interfaces, reading and note-taking in digital environments, physiological user modeling, and creativity support tools, with the goal of designing systems that are both technically robust and human-centered.

How can user interfaces be augmented with intelligence to support interaction?

Gaze & Multimodal Interaction

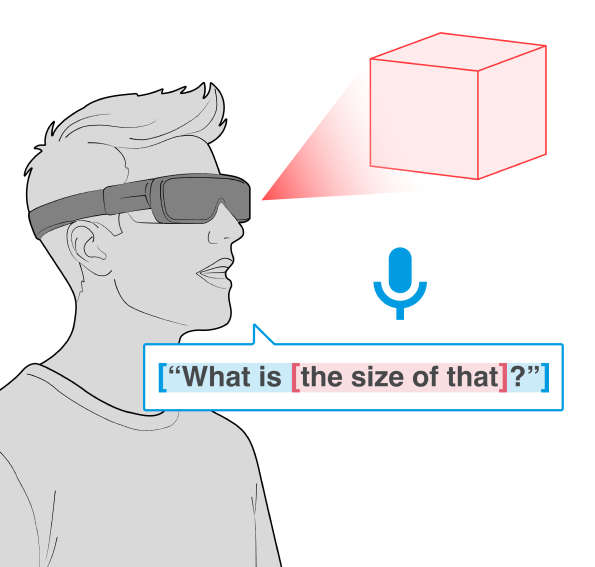

I investigate how multiple input modalities—such as gaze and speech—can be combined to enable more natural and expressive interaction. My work focuses on implicit and multimodal interaction, where systems infer user intent from natural behavior rather than relying solely on explicit input.

Key publications

- Gaze and speech in multimodal HCI: A scoping review — CHI 2026

- Integrating gaze and speech for enabling implicit interactions — CHI 2022

- GAVIN: Gaze-assisted voice-based implicit note-taking — TOCHI 2021

Reading, Note-Taking & Digital Learning

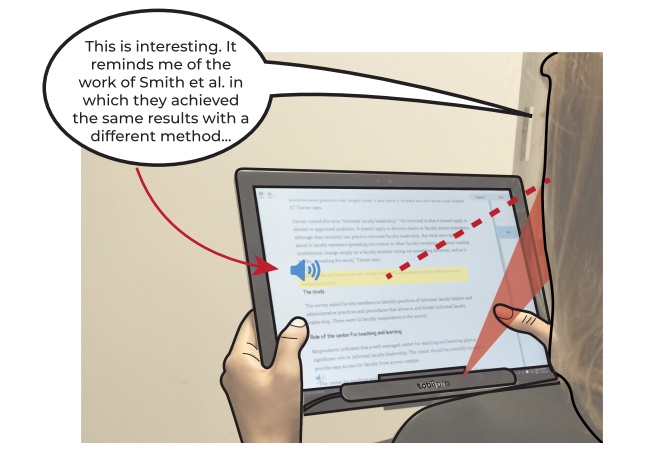

This work investigates how input modality, task difficulty, and interaction design shape learning and knowledge retention in digital environments, with the goal of designing tools that actively support learning rather than simply digitize existing workflows.

Key publications

- To type or to speak? Input modality and comprehension — CHI 2022

- How Difficult is the Task for you? Modelling and Analysis of Students' Task Difficulty Sequences in a Simulation-Based POE Environment — IJAIED 2022

- GAVIN: Gaze-assisted voice-based implicit note-taking — TOCHI 2021

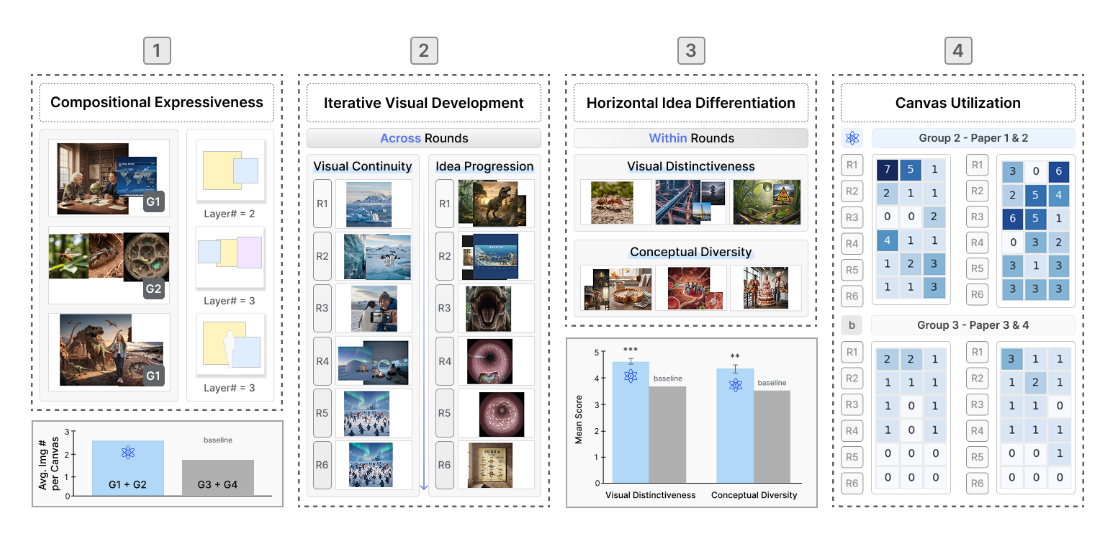

Creativity Support Tools

This work explores how intelligent systems can support creativity and expressive work, particularly in immersive environments. It focuses on designing tools that augment ideation, collaboration, and creative workflows using AI and XR technologies, extending my prior work in interaction and user modeling toward systems that enhance human expression.

Selected work

- Atomix: augmenting brainsketching through generative visual outputs — C&C 2026 (in press)

- Understanding XR-Supported Creative Collaboration through a Domain-Specific Lens — HCI Korea 2026

- Passthrough Interpretive Assistant: Revealing Hidden Intent and Bias in eXtended Reality with AI — HCI Korea 2026

What insights about human behavior can be derived from interaction and sensor data?

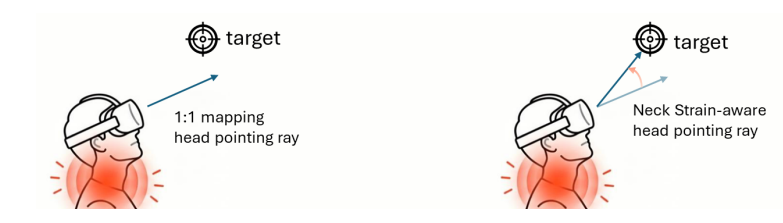

Eye–Head Dynamics in XR

This research investigates how eye and head movements coordinate dynamically and how this coordination can be leveraged to design efficient, hands-free interaction techniques. It introduces methods for distinguishing gaze and head intent, modeling attention shifts, and enabling adaptive techniques that respond to user behavior in real time.

Key publications

- Directed or Guided? Classification of Gaze Attention Shifts based on Eye and Head Movement — ETRA 2026

- GazeSwitch: Automatic Eye-Head Mode Switching for Optimised Hands-Free Pointing — ETRA 2024

- Classifying head movements to separate head-gaze and head gestures as distinct modes of input — CHI 2022

Physiological & Sensor-Based User Modeling

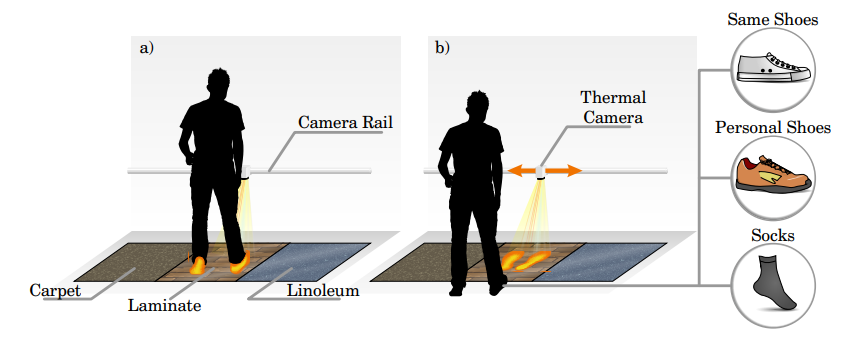

This work explores how physiological and behavioral signals can be used to infer users’ identity and their cognitive and affective states. It leverages modalities such as eye tracking, electrodermal activity (EDA), and thermal imaging to detect attention, cognitive load, and mind wandering. The goal is to enable adaptive, context-aware systems that respond intelligently to users’ internal states and support more effective interaction.

Key publications

- HotFoot: Foot-Based User Identification Using Thermal Imaging — CHI 2022

- Mind Wandering in a Multimodal Reading Setting: Behavior Analysis & Automatic Detection Using Eye-Tracking and an EDA Sensor — Sensors 2022

- Classifying Attention Types with Thermal Imaging and Eye Tracking — IMWUT 2019

Full Publications

For a complete list of publications, see my Google Scholar profile.